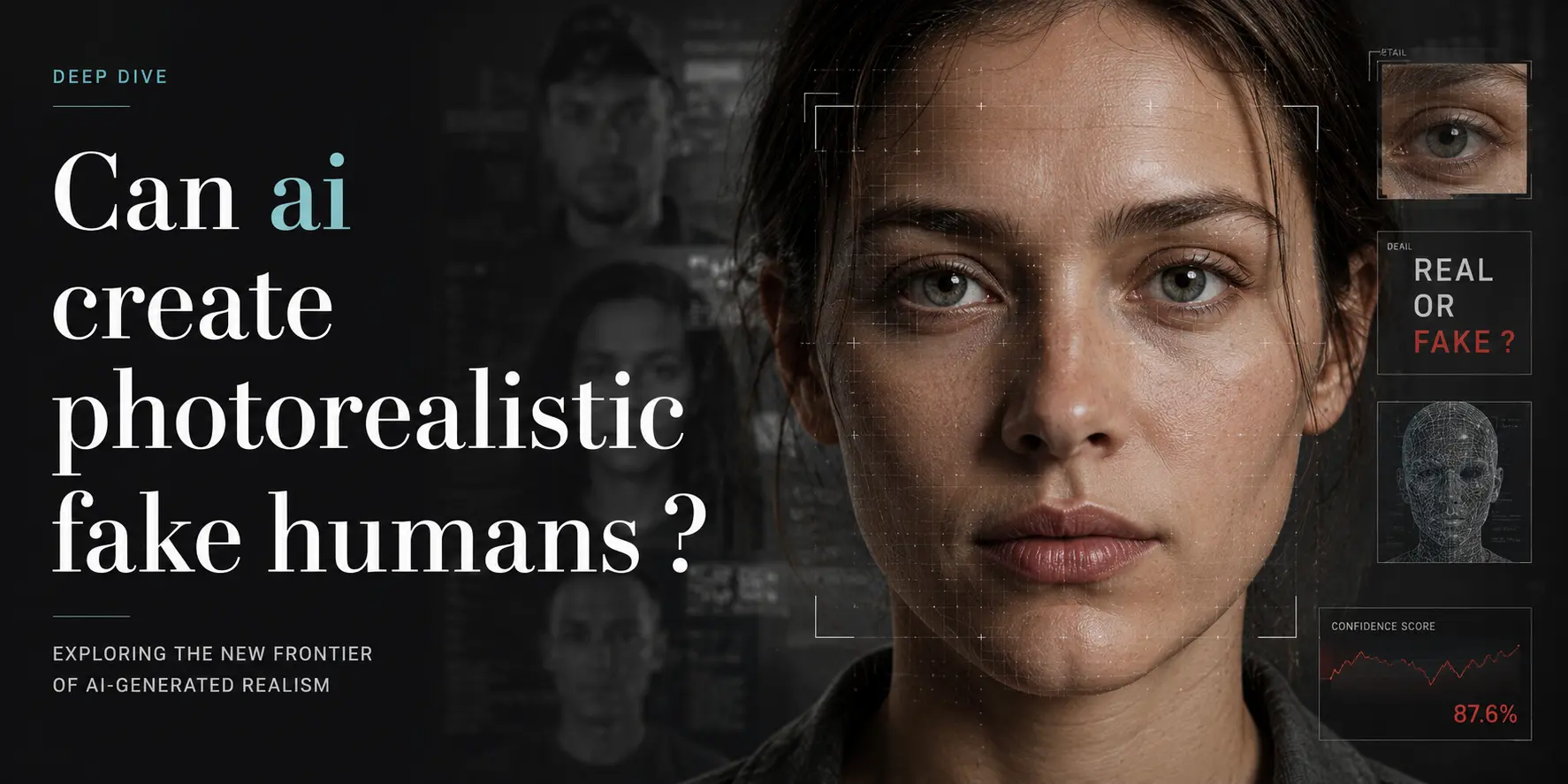

The face in the crowd that isn’t human

On a Tuesday in March 2024, a LinkedIn post landed in my feed with a headshot of a woman whose keen gaze seemed to judge my choice of morning coffee. She had the subtle glow of natural window light, a faint freckle below her right eyebrow, and a wisecracker’s smirk. This was not the usual polished corporate stock photo. A reverse-image search returned nothing. Her name was “Elena K.” and, according to her bio, she led AI ethics at a stealth Berlin startup. When I messaged the company, the receptionist replied, “We don’t have anyone by that name.”

"The first time a model fooled half of human judges was April 2019, when StyleGAN’s faces crossed the threshold without anyone noticing."

State of the art: pixels that forget they’re pixels

Today’s best models erase the seams between pixels and pores. NVIDIA’s StyleGAN3 (2021) and EdEdit (2023) synthesize 1024×1024 faces that, in psychophysical tests, fool 45–55 % of viewers. That range straddles 50 %, the chance rate at which viewers are effectively guessing and which psychologists treat as the threshold for “human-level” deception. Diffusion models such as DALL·E 3 and Stable Diffusion XL push realism further by letting users prompt attributes like “subtle acne scars, Rembrandt lighting, 45-degree head tilt.” Published benchmarks report that human evaluators misclassify AI-generated portraits as real 61 % of the time when the images are shown for only 150 milliseconds, less than a glance.
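A fooling rate only means something relative to chance and to the number of judgments behind it: 52 % from 100 trials and 52 % from 10,000 trials support very different conclusions. The sketch below is a minimal illustration, not the method used in any study mentioned here. It computes a Wilson score interval for an observed fooling rate and checks whether 50 % (pure guessing in a real-vs-fake task) falls inside it. The function names, the 95 % confidence level (z = 1.96), and the example counts are assumptions chosen for illustration.

```python
import math


def fooling_rate_ci(fooled: int, trials: int, z: float = 1.96) -> tuple[float, float, float]:
    """Return (rate, low, high): the observed fooling rate and its Wilson score interval."""
    if trials <= 0 or not 0 <= fooled <= trials:
        raise ValueError("need trials > 0 and 0 <= fooled <= trials")
    p = fooled / trials
    denom = 1 + z**2 / trials
    centre = (p + z**2 / (2 * trials)) / denom
    half = z * math.sqrt(p * (1 - p) / trials + z**2 / (4 * trials**2)) / denom
    return p, centre - half, centre + half


def consistent_with_guessing(fooled: int, trials: int, chance: float = 0.5) -> bool:
    """True if the chance rate lies inside the interval, i.e. the data cannot rule out guessing."""
    _, low, high = fooling_rate_ci(fooled, trials)
    return low <= chance <= high


# Hypothetical counts for illustration only, not data from any cited study.
for fooled, trials in [(52, 100), (610, 1000)]:
    rate, low, high = fooling_rate_ci(fooled, trials)
    print(f"{fooled}/{trials}: rate={rate:.2f}, 95% CI=({low:.3f}, {high:.3f}), "
          f"guessing plausible={consistent_with_guessing(fooled, trials)}")
```

With these made-up counts, 52 fooled out of 100 yields an interval of roughly 42 % to 62 %, which is consistent with guessing. By contrast, 610 out of 1,000 yields an interval starting near 58 %, meaning viewers did measurably worse than chance.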

Where the systems still stumble is fine anatomy: fingers often fuse into sausages, ears detach like wingdings, and teeth become a monochrome grille. Video is even harder. Sora (February 2024) can generate 60-second clips of “people” walking, but the blink rate is off, skin pores flicker like strobe lights, and the left hand usually copies the right.
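Blink statistics are one of the cheaper cues that detectors have used against synthetic video. The following is a minimal sketch that assumes per-frame eye landmarks already come from a face-landmark detector, which is not shown. It computes the eye aspect ratio (EAR) described by Soukupová and Čech (2016), counts runs of low-EAR frames as blinks, and flags any clip whose blinks per minute fall outside a plausible band. The 0.21 threshold, the two-frame minimum, and the 8 to 30 blinks-per-minute band are illustrative assumptions rather than calibrated values. The function names are hypothetical.

```python
import math

Point = tuple[float, float]


def eye_aspect_ratio(p1: Point, p2: Point, p3: Point, p4: Point, p5: Point, p6: Point) -> float:
    """EAR = (|p2-p6| + |p3-p5|) / (2 |p1-p4|); p1/p4 are the eye corners,
    p2/p3 lie on the upper lid, p6/p5 on the lower lid beneath them."""
    return (math.dist(p2, p6) + math.dist(p3, p5)) / (2 * math.dist(p1, p4))


def count_blinks(ear: list[float], threshold: float = 0.21, min_frames: int = 2) -> int:
    """Count runs of at least `min_frames` consecutive frames with EAR below `threshold`."""
    blinks = run = 0
    for value in ear:
        if value < threshold:
            run += 1
        else:
            if run >= min_frames:
                blinks += 1
            run = 0
    if run >= min_frames:  # the clip ended while the eye was closed
        blinks += 1
    return blinks


def blink_rate_suspicious(ear: list[float], fps: float,
                          low: float = 8.0, high: float = 30.0) -> bool:
    """Flag a clip whose blinks per minute fall outside an assumed plausible human band."""
    if not ear or fps <= 0:
        raise ValueError("need a non-empty EAR series and a positive frame rate")
    minutes = len(ear) / fps / 60
    rate = count_blinks(ear) / minutes
    return not (low <= rate <= high)


# Synthetic demo: 60 s at 30 fps, eyes open (EAR 0.3) except three 3-frame blinks.
demo = [0.3] * 1800
for start in (100, 700, 1300):
    demo[start:start + 3] = [0.1] * 3
print(count_blinks(demo), blink_rate_suspicious(demo, fps=30))
```

The demo clip contains three blinks in one minute, well below the assumed band, so it gets flagged. In practice, EAR thresholds shift with camera angle and subject, so any real detector would need per-clip calibration. An out-of-band blink rate is a weak signal, not proof of synthesis.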

Key milestones: the uncanny valley sprint

- October 2017 — ProGAN (NVIDIA) scales GANs to 1024×1024 faces. Early viewers report “creepy doll” vibes.

- December 2018 — StyleGAN debuts. Faces now have pores, wrinkles, and asymmetric lighting. Reddit users nickname the outputs “magic avatars.”

- April 2019 — StyleGAN’s faces reach the 50.0 % fooling rate in a controlled study by the University of Washington. Researchers quietly update the benchmark paper’s title mid-review: “The Unreasonable Effectiveness of Deepfake Faces.”

- June 2021 — StyleGAN3 introduces translation and rotation equivariance, so fine detail such as hair and skin texture moves with the face instead of sticking to fixed pixel positions. Celebrities start noticing synthetic doppelgängers of themselves licensed for ads.

- March 2023 — Adobe Firefly ships with a generative-fill tool that can insert a plausible-looking coworker into a corporate headshot—complete with matching shirt texture.

- February 2024 — ElevenLabs releases a voice-cloning API; a month later, a viral TikTok shows a synthetic “CEO” announcing layoffs in the founder’s voice and face, cloned from a single earnings-call clip.

The human angle: who wins, who scatters

For casting directors, photojournalists, and model agencies, the new capability is both a gold rush and an identity crisis. Recruiters now run initial screenings through AI-generated interviewer stand-ins. One HR platform reports a 30 % drop in missed interviews because a synthetic interviewer never cancels at the last minute. Yet the same tool lets authoritarian regimes produce “evidence” of fabricated dissidents attending protests, seeding doubt faster than fact-checkers can debunk it.

"Each 0.1 % improvement in fooling rate doesn’t just move pixels—it redistributes risk from platforms to publics."

Artists who once sold stock portraits see royalty streams evaporate overnight. Meanwhile, synthetic-face startups raise seed rounds on the premise that any human face can be cloned into a brand ambassador. The losers are the trust infrastructures, which now have to be rebuilt: journalism schools add deepfake detection labs, border agencies deploy liveness tests, and families learn to ask the relative on a holiday Zoom call to wave a hand in front of their face.

What’s next: the coming 12–24 months

Expect two tracks to accelerate in parallel. First, a fidelity arms race: Stable Diffusion 3 (scheduled for Q3 2024) promises 4K-resolution faces with stable hair strands and incisors that actually look like teeth. Second, watermarking and provenance standards will harden. Through the Content Authenticity Initiative (CAI), Adobe already attaches Content Credentials to Firefly-generated images. These are cryptographically signed C2PA manifests embedded in the file. Meanwhile, the EU AI Act, whose obligations phase in from 2025, will require disclosure for “realistic” synthetic humans.
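Cryptographic provenance rests on one idea: bind a claim about an image (what generated it) to a hash of its exact bytes, then sign that claim so that neither part can change without detection. The sketch below illustrates only that idea and is not the C2PA format. Real Content Credentials use certificate-based public-key signatures and a structured manifest. For brevity, this example substitutes a shared-secret HMAC from Python's standard library. The field names, the key, and the placeholder bytes are all hypothetical.

```python
import hashlib
import hmac
import json


def _payload(claim: dict) -> bytes:
    # Canonical JSON so the same claim always serialises to the same bytes.
    return json.dumps(claim, sort_keys=True, separators=(",", ":")).encode()


def make_manifest(image_bytes: bytes, generator: str, key: bytes) -> dict:
    """Bind a provenance claim to the image's SHA-256 hash and sign it (HMAC stand-in)."""
    claim = {
        "sha256": hashlib.sha256(image_bytes).hexdigest(),
        "generator": generator,
        "synthetic": True,
    }
    signature = hmac.new(key, _payload(claim), hashlib.sha256).hexdigest()
    return {"claim": claim, "signature": signature}


def verify_manifest(image_bytes: bytes, manifest: dict, key: bytes) -> bool:
    """True only if the claim is unaltered AND still matches these exact image bytes."""
    claim = manifest["claim"]
    expected = hmac.new(key, _payload(claim), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, manifest["signature"]):
        return False  # claim edited, or signed with a different key
    return hmac.compare_digest(claim["sha256"], hashlib.sha256(image_bytes).hexdigest())


# Hypothetical usage: the bytes and key are placeholders, not a real image or key.
key = b"demo-signing-key"
image = b"placeholder image bytes"
manifest = make_manifest(image, "hypothetical-portrait-model", key)
print(verify_manifest(image, manifest, key))            # unchanged image
print(verify_manifest(image + b"\x00", manifest, key))  # one byte appended
```

The two calls print True, then False. The sketch exposes two failure modes. Changing even a single byte breaks verification, and simply stripping the manifest removes the provenance claim altogether. That second weakness is one reason provenance metadata is often discussed alongside watermarks embedded in the pixels themselves.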

In video, Runway Gen-3 and Pika Labs are iterating on 1080p “talking head” clips that can lip-sync to any script in under five minutes. The gap between “uncanny” and “unremarkable” is shrinking, but the ultimate hurdle remains consistency: a 30-second clip still betrays itself within two or three blinks.

Ethicists predict a surge in “face-doubling” protection services—apps that let users upload a selfie and generate an entire synthetic media kit for secure communications. Meanwhile, romance scammers already use these models to clone the faces of celebrities and soldiers, banking on the human reflex to trust a pretty smile.

Reflection: the mirror we’ve broken

We used to worry about Photoshop airbrushing a wrinkle away. Now Photoshop can airbrush an entire identity into existence. The milestone wasn’t April 2019, when the model fooled half of us; it was the moment we stopped caring which half we belonged to. The real question isn’t whether AI can make photorealistic fake humans, but how many of us will still bother to look.