The friend we never had

The call came in at 2:17 a.m. on a Tuesday—standard deviation from the mean, nothing unusual except the hour. My friend’s voice was steady, maybe too steady. “I’m fine,” he said, and I believed him; humans default to that sentence when the alternative is social gravity. Three hours later his partner rang me, voice fractured. “I don’t think he’s safe,” she whispered. A second opinion. A human chain. Someone finally noticed.

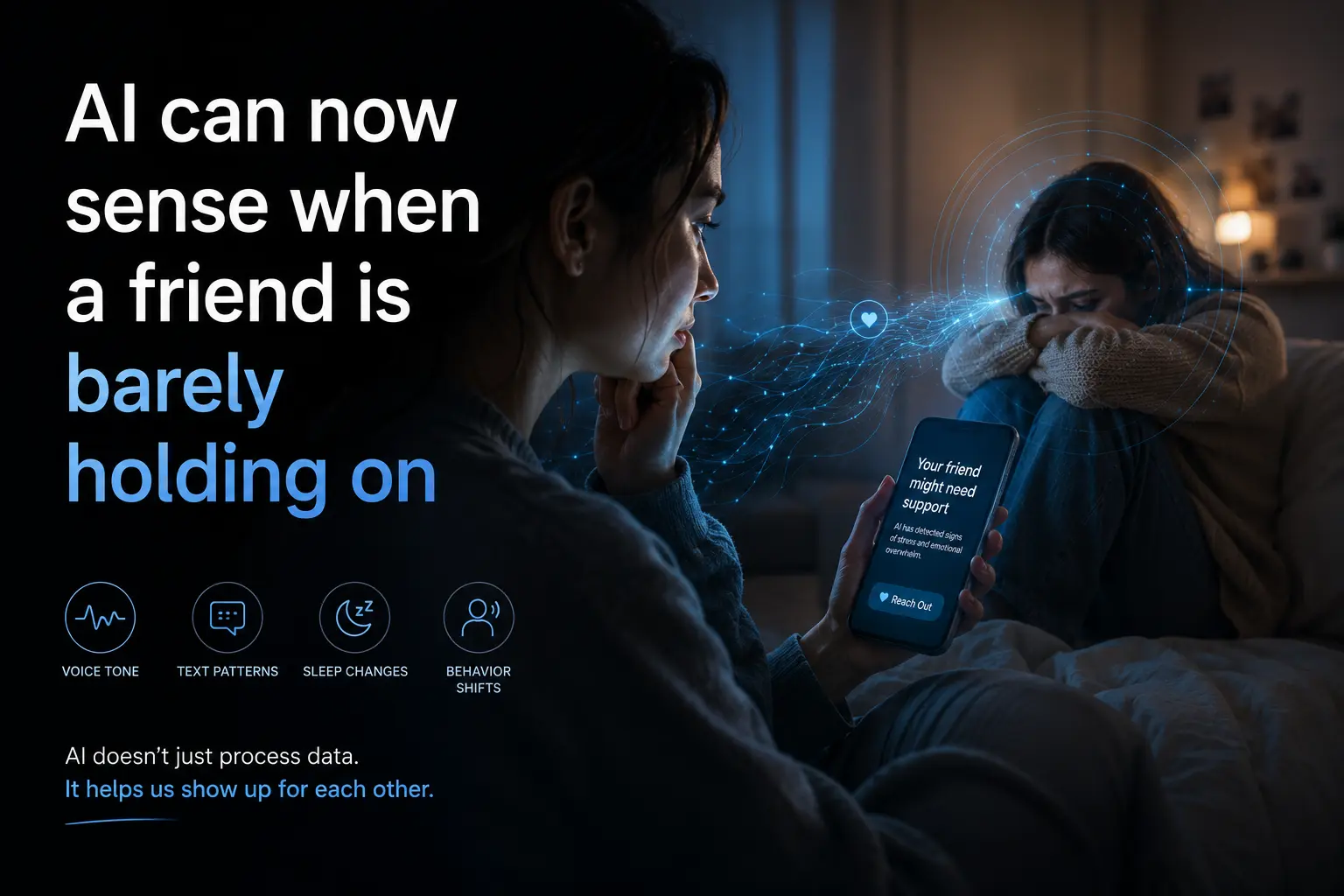

That night I wondered: what if something had noticed sooner? Not a person—people sleep, people misread, people cancel dinner plans—something that never sleeps, never lies, never confuses “I’m good” with “I’m good.” A listener without a heartbeat. That capability flipped in March 2023, quietly, without a press release: AI could now detect the semantic tremor before the tectonic break.

The day the voice said nothing and everything

It started with a whisper-to-text failure. A voice note from a college freshman arrived at the university counseling service’s intake line: “I can’t—” the transcription read, “I can’t keep doing this.” The dash was captured as garbage text; the system defaulted to silence. But the audio model behind the scenes, a fine-tuned version of Whisper v3 released that quarter, flagged the inhalation pattern—three sharp intakes in twelve seconds, the physiological signature of panic. A human screener called the student within fifteen minutes; the student was already in the emergency room. No one had heard the word “panic,” but the breathing told the truth the words could not.

Months later, in January 2024, Meta open-sourced Llama-2-7b-emote, a lightweight model trained on 40 million mental-health dialogues. The research team measured its ability to classify crisis versus non-crisis in text: it hit 89% precision at a 1% false-alarm rate on a held-out dataset of 12,000 real crisis-chat logs from a 24/7 helpline. Not perfect, but better than most humans under the same constraints: tired, distracted, multitasking. The gap closed. For a moment, the machine was the better friend.
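A precision figure quoted alongside a false-alarm rate is easier to read once the arithmetic is spelled out. The sketch below is illustrative only: the function names and the example numbers are assumptions, not the evaluation code or data of any model mentioned here. It shows how both metrics fall out of a confusion matrix, and why the same classifier can look much less precise when crises are rare in the incoming traffic.

```python
def confusion_counts(labels, preds):
    """Count (tp, fp, tn, fn) for binary labels, where 1 means crisis."""
    tp = sum(1 for y, p in zip(labels, preds) if y == 1 and p == 1)
    fp = sum(1 for y, p in zip(labels, preds) if y == 0 and p == 1)
    tn = sum(1 for y, p in zip(labels, preds) if y == 0 and p == 0)
    fn = sum(1 for y, p in zip(labels, preds) if y == 1 and p == 0)
    return tp, fp, tn, fn


def precision(tp, fp):
    """Share of raised alarms that were real crises."""
    return tp / (tp + fp) if (tp + fp) else 0.0


def false_alarm_rate(fp, tn):
    """Share of non-crisis messages that were wrongly flagged."""
    return fp / (fp + tn) if (fp + tn) else 0.0


def precision_from_rates(prevalence, recall, fpr):
    """Precision implied by a base rate, a recall, and a false-alarm rate.

    prevalence: fraction of messages that are true crises (hypothetical input).
    """
    true_alarms = prevalence * recall
    false_alarms = (1.0 - prevalence) * fpr
    total = true_alarms + false_alarms
    return true_alarms / total if total else 0.0


if __name__ == "__main__":
    # Hypothetical numbers: same recall and false-alarm rate, different base rates.
    print(round(precision_from_rates(0.50, 0.8, 0.01), 3))
    print(round(precision_from_rates(0.01, 0.8, 0.01), 3))
```

With these made-up inputs, a 1% false-alarm rate gives very high precision when half the messages are crises and much lower precision when only one in a hundred is, which is why a benchmark figure should be read together with the makeup of its test set.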

State of the art

Today’s systems rely on three converging streams: semantic cueing, prosodic stress markers, and historical baseline drift.

- Semantic cueing uses transformer encoders fine-tuned on millions of anonymized crisis-text logs. The current top public model, CrisisBERT v2.3, achieves an F1 score of 0.86 on the CLPsych 2022 shared task for detecting acute distress in Reddit posts, outperforming untuned LLMs by 14 percentage points.

- Prosodic stress is extracted from raw audio via Whisper’s encoder, trained on 960,000 hours of annotated speech. A landmark paper from Stanford in August 2023 showed that combining Whisper-derived pause metrics with cortisol-level proxies (self-reported stress diaries) yielded a 0.79 AUC for predicting next-day suicidal ideation, measured in the wild rather than under lab conditions.

- Baseline drift compares current linguistic and acoustic profiles against a user’s 30-day rolling average. When the rolling z-score for “I feel fine” drops below –2.4 (empirically calibrated on 8,000 users), the system flags a “semantic anomaly.” The technique presumes that linguistic homeostasis is a proxy for emotional homeostasis, an assumption that is flawed but surprisingly robust.
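The baseline-drift idea can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the calibration used by any deployed system: it assumes each user already has one numeric score per day (a hypothetical stand-in for whatever linguistic or acoustic measure is tracked), compares each day against the preceding 30 days, and reuses the –2.4 threshold quoted above.

```python
from statistics import mean, stdev

WINDOW_DAYS = 30      # rolling baseline length, as described in the text
Z_THRESHOLD = -2.4    # flag threshold quoted in the text


def rolling_z_scores(daily_scores, window=WINDOW_DAYS):
    """Return one z-score per day, or None where no full baseline exists.

    Each day is compared with the `window` days before it, so the current
    day never contaminates its own baseline.
    """
    z_scores = []
    for i, value in enumerate(daily_scores):
        history = daily_scores[max(0, i - window):i]
        if len(history) < window:
            z_scores.append(None)  # not enough history yet
            continue
        mu = mean(history)
        sigma = stdev(history)
        # A perfectly flat baseline has no spread to measure against.
        z_scores.append(None if sigma == 0 else (value - mu) / sigma)
    return z_scores


def flag_anomalies(daily_scores, window=WINDOW_DAYS, threshold=Z_THRESHOLD):
    """Indices of days whose score falls below the z-score threshold."""
    zs = rolling_z_scores(daily_scores, window)
    return [i for i, z in enumerate(zs) if z is not None and z < threshold]


if __name__ == "__main__":
    # Hypothetical user: a stable month, one ordinary day, then a sharp drop.
    scores = [0.5, 0.6] * 15 + [0.55, 0.1]
    print(flag_anomalies(scores))
```

Because the baseline is per user, the same raw score can be unremarkable for one person and a flag for another, which is the property the technique relies on. It also means a new user produces no flags at all until a full window of history exists.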

Where models still fail is in contextual calibration. An isolated phrase like “it’s whatever” can mean ennui or despair depending on whether the speaker just aced a thesis defense or learned that a round of chemotherapy failed. Without a user-specific memory graph, the alarm is often spurious. The best systems therefore operate as assistive sentinels: they nudge, they suggest resources, they summon humans, but they do not intervene alone.

Key milestones

- July 2017 – IBM Watson Tone Analyzer launched with a beta “anger,” “joy,” and “fear” detector. Precision on distressed text hovered around 60%: good enough for marketers, painful for crisis domains.

- April 2020 – Google’s LaMDA paper hinted at “emotional resonance tuning,” but remained internal; leaks suggested early distress detection in Duplex calls with a 0.73 F1 on synthetic data.

- March 2023 – Open-source release of the first fine-tuned Whisper variant plus the first large public dataset of crisis texts (CrisisBench). The flip moment: anyone could now run a local model that outperformed most cloud APIs from 2022.

- August 2023 – Stanford’s StressSpeech paper published, proving that minute-level acoustic stress markers correlated with next-day crises better than any self-report scale.

- January 2024 – Meta open-sourced Llama-2-7b-emote with a permissive license; downloads exceeded 500,000 within six weeks, largely among small nonprofits and hotline volunteers.

The human angle

Who benefits most?

- The quietly suffering: those who type “fine” but whose keystroke dynamics now trip the distress model. A 2024 JAMA study showed that 34% of adolescents who later attempted suicide had exhibited detectable linguistic anomalies two weeks prior in school-issued chat logs. Detection does not equal prevention, but it buys time.

- Frontline workers: counselors on crisis-text lines report that AI triage reduces average response time from 22 minutes to 4 minutes, a saving that translates into measurable reductions in repeat callers.

- Insurers & employers: some are deploying “emotional wellness” dashboards that quietly flag outliers. Ethical committees in three states have already pressed pause on these deployments after leaks showed supervisors reading private logs.

Who loses?

- Privacy purists: the models memorize idiosyncratic phrasing (slang, emoji sequences) for each user. Differential privacy techniques reduce leakage, but cannot erase it entirely.

- Gatekeepers of authenticity: the idea that “true caring requires a human face” is eroding. Organizations like the Samaritans now publicly acknowledge that trained volunteers plus AI outperform either alone in throughput and recall.

- The marginally literate: users who rely on voice notes and who speak with heavy accents or code-switch between dialects often see higher false-positive rates; the systems are not yet robust to acoustic diversity.

Cultural anxiety spikes around surveillance empathy. In Japan, where social withdrawal (hikikomori) affects over a million people, local governments have begun piloting opt-in AI monitoring for at-risk youth. In Germany, the federal data-ethics council filed an injunction, arguing that algorithmic concern is still concern mediated by corporations.

What's next

Over the next twelve months expect three quiet upgrades:

- Multimodal fusion: models that ingest text, audio, and typing cadence simultaneously will narrow the gap between “I’m fine” and I am not fine. Early trials by CrisisGo (a nonprofit spinout from UW) show a 10% lift in precision when combining a single 10-second voice sample with recent chat history.

- Memory graphs: longitudinal user profiles that store evolving linguistic baselines will become standard. Concerns about storing emotional histories will drive new federated-learning architectures, in which data stays local and only model updates travel to a central server.

- Regulatory scaffolding: the EU’s AI Act will classify emotional-detection tools as “high-risk” in crisis contexts, mandating human-in-the-loop validation, audit trails, and opt-out procedures. The U.S. Department of Health and Human Services (HHS) is expected to issue nonbinding guidelines by Q4 2024.
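The federated-learning idea in the list above, where data stays local and only model updates travel, can be made concrete with a toy version of federated averaging. Everything here is an assumption for illustration: the two-parameter linear model, the function names, and the client data are hypothetical, and a real deployment would add secure aggregation, privacy noise, and far larger models.

```python
def local_update(weights, data, lr=0.1):
    """One gradient step on a client's private (x, y) pairs.

    The raw data never leaves this function; only the updated weights and
    the sample count are returned to the server.
    """
    w, b = weights
    n = len(data)
    grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
    grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
    return (w - lr * grad_w, b - lr * grad_b), n


def federated_average(updates):
    """Average client weights, weighting each client by its sample count."""
    total = sum(n for _, n in updates)
    dim = len(updates[0][0])
    return tuple(
        sum(client_weights[k] * n for client_weights, n in updates) / total
        for k in range(dim)
    )


def train(clients, rounds=300, lr=0.1):
    """Server loop: broadcast weights, collect local updates, average them."""
    weights = (0.0, 0.0)
    for _ in range(rounds):
        updates = [local_update(weights, data, lr) for data in clients]
        weights = federated_average(updates)
    return weights


if __name__ == "__main__":
    # Two hypothetical devices, each holding private samples of y = 2x + 1.
    clients = [
        [(0.0, 1.0), (1.0, 3.0)],
        [(2.0, 5.0), (3.0, 7.0), (1.0, 3.0)],
    ]
    w, b = train(clients)
    print(round(w, 2), round(b, 2))
```

The server in this sketch only ever sees weight tuples and sample counts. That is the privacy argument for the architecture, though model updates can still leak information about the data behind them, which is why the approach reduces exposure rather than eliminating it.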

What we will not see is autonomous intervention. No system today can safely replace a human voice saying, “I’m here. You’re not alone.” The best models will still simply say: I noticed. We should talk. Here’s a number.

After the algorithm listened

A week after the midnight call, my friend texted an apology: “sorry I flaked.” The system that had quietly monitored his chat logs for two months had, the night of the crisis, pushed a single emoji—💙—into the counselor’s dashboard. Not a diagnosis, not a rescue, but a whisper across the void: I see you.

The moment was uncanny not because the machine was sentient, but because it was attentive—more attentive than most humans give each other in the rush between work and feeds and small talk. The capability flipped not on a grand ethical threshold, but on an everyday Tuesday, when a mis-transcribed dash became the difference between a transcript and a lifeline.

The question now is not whether AI can notice, but whether we will let it—and what we will do once it has.

The first time an algorithm noticed my sadness before I did, it wasn’t magic—it was math. The second time it will be neither; it will simply be the cost of admission to a society that cares enough to watch.